Where do we draw the AI line?

Let me start with a disclaimer: I am not currently wielding my pitchfork. Rest assured, it is safely tucked away - and as a "conflict expert", I rarely turn to it to make my point. That being said... this may read as an anti-AI post. Don't come at me... yet!

I’ve used AI - I think AI can be helpful, has enormous potential, and its widespread implementation is impending. At this stage, we are so entrenched in AI promotion and integration that it feels like the train wheels may never stop moving. Our future with AI is uncertain, but we are seeing early warning signs now that boundaries with AI should be put into place before improper or inappropriate use becomes the norm.

A couple of months ago, researchers at MIT published the study "Sycophantic Chatbots Cause Delusional Spiraling, Even in Ideal Bayesians" - showing causality (the hardest thing to establish in research): AI chatbots make a person vulnerable to delusional spiraling, and sycophancy (excess flattery and praise) plays a causal role in "dangerous confidence in outlandish beliefs." We've all experienced it... you send an AI chatbot a nonsensical or unhinged thought. It responds: "you are so right, I'm absolutely with you"; "that's a very smart way to be thinking about this"; "that's not wrong, that's you prioritizing you".

AI MIT Research: https://arxiv.org/abs/2602.19141

I have seen a lot of AI HR products emerge in recent years. They can be highly sophisticated in making data-driven predictions. For example, some can predict which employees are likely to quit, flag underperforming teams, spot patterns in manager effectiveness, forecast hiring needs, model the impact of restructuring, and so much more. Increasingly, some platforms (and ChatGPT-style integrations) can help a person analyze manager communication patterns, suggest different behaviors or responses, offer real-time support, and more. I am not claiming these tools are harmful or ineffective in their current state - just that the boundary between using AI for support and using it for decision-making is becoming increasingly muddled.

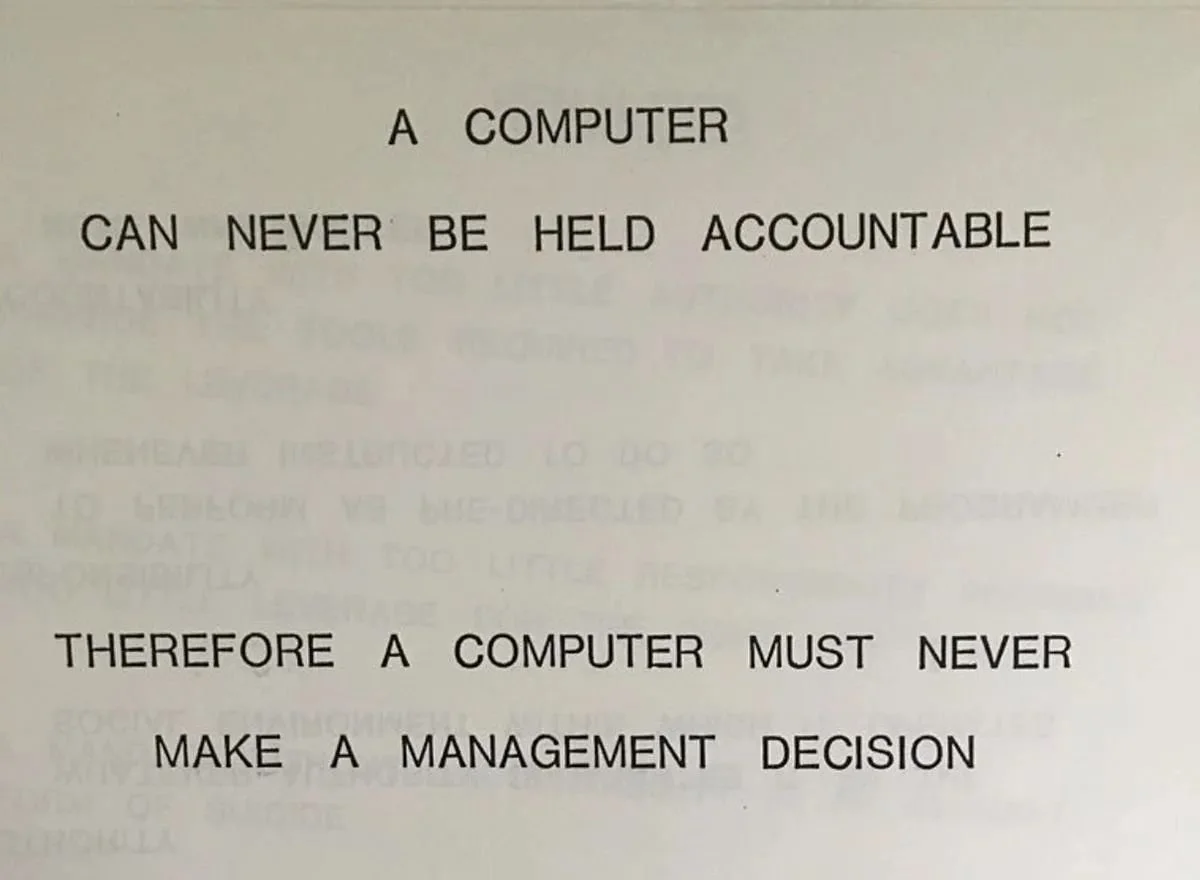

I often see posts on social media with the image of the widely cited 1979 IBM training slide regarding management decisions and accountability:

IBM Training Slide, 1979

The image has had a digital revival in our era of rampant AI use in the workplace. The quote speaks to the ultimate liability for an employment decision, and whether that decision should be made at all if no real person can stand up and own it. IBM recently revisited this debate over AI in decision-making in a published article, in which it cited three key considerations: ethics, risk, and trust. "To ensure alignment with ethical principles, reduce the risk of wrong choices and engender stakeholder and customer trust, businesses are best served by keeping humans in the loop."

Many of the AI forecasting tools I mentioned above go beyond just dabbling in issues and decisions surrounding ethics, risk, and trust. They play a central role in providing the information and judgement needed to make big workplace decisions. I advise using these tools intentionally and scrutinizing the results as part of an organization's ongoing due diligence process in this age of AI.

In contrast, the fast-emerging market for AI chatbot-style HR support and decision-making tools, combined with the research by MIT referenced above, worries me. At the unfortunate risk of quoting Robin Thicke: I really do hate these blurred lines. And if we don't make them clear now, we risk getting swept away by the current.

With AI use and dependency only increasing, I worry too many well-intentioned organizations are turning to these tools in the name of efficiency, but at the cost of losing the gift of humanity that aids in our decision-making: the keen ability to witness and balance nuances of ethics, power, identity, culture, and more, in real time. At the end of the day, employment decisions turn on those same questions. And the result is a decision that will ultimately affect someone's livelihood.

I think we should be proactive in setting better boundaries around AI use in management and HR decision-making, while not shying away from adopting this powerful technology that has the potential to stick around for a very long time. Don't let "AI sycophancy" lead you down delusional spirals (AKA poor management decisions, needless risk exposures, toxic cultures, retaliatory behaviors, etc.).

At a time when we are being asked to make everything artificial, lean into the human. Be the humans that folks need Human Resources for. Lean on your experience, education, peers, and YES, outside consultants (😉) to make informed decisions surrounding ethics, risk, and trust. And it's okay to use AI - it can be a highly effective tool if wielded strategically.

If you're curious about how to implement intentional and progressive HR strategies in your organization - without isolating yourself from modern-day technology - please reach out. I help set up systems, processes, and policies that bring these seemingly contradictory goals into harmony.

And NO, ChatGPT did not help me write this!